High Performance Computing (HPC) vs OnScale

The world is moving to Cloud-based solutions for everything, and OnScale is at the forefront of this movement for multiphysics simulations.

March 11, 2020

At OnScale, we have taken our powerful solvers and deployed them on a Cloud platform that offers the best performance, cost, and accessibility for engineers looking to simulate their toughest multiphysics problems.

Traditional High Performance Computing (HPC) hardware has numerous roadblocks that make simulating large problems infeasible. In this blog post, we’ll take a look at the ways OnScale solves these problems and helps engineers quickly obtain valuable data from their simulations.

Access Cloud Supercomputer Performance

With typical on-premise HPC, you’re always limited by the hardware that has been purchased. There is a fixed number of cores, a fixed amount of memory, and a fixed number of software licenses, with limits on the number of cores that can be used (either explicitly by the license or by practical limits on solver scalability).

OnScale removes all of these barriers.

The Cloud provides effectively unlimited computational resources to meet any simulation need that you have. Want to solve 1,000 permutations of a design? You can simulate all of them simultaneously with OnScale and get the results back in about the time it would take to solve one simulation (a 1,000X increase in throughput).
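The arithmetic behind that 1,000X figure can be sketched in a few lines of Python. This is only an illustration, not OnScale code: the function name `batch_wall_time` and its parameters are hypothetical, and it assumes every run takes the same time and that there is no startup or queueing overhead.

```python
import math


def batch_wall_time(num_sims, time_per_sim, parallel_slots):
    """Wall-clock time to finish num_sims equal-length runs.

    Assumption (illustrative only): at most parallel_slots runs execute
    at once, every run takes time_per_sim, and overhead is ignored.
    """
    if num_sims <= 0:
        return 0.0
    # Runs complete in "waves" of up to parallel_slots simulations each.
    waves = math.ceil(num_sims / parallel_slots)
    return waves * time_per_sim


# One run at a time versus all 1,000 runs at once (time in arbitrary units).
one_at_a_time = batch_wall_time(1000, 1.0, parallel_slots=1)
all_at_once = batch_wall_time(1000, 1.0, parallel_slots=1000)
speedup = one_at_a_time / all_at_once

# A fixed on-premise cluster sits in between, e.g. 64 concurrent runs.
fixed_cluster = batch_wall_time(1000, 1.0, parallel_slots=64)
```

The point of the sketch is that the speedup is capped by the number of runs that can execute concurrently, which is exactly the limit that fixed hardware and per-seat licenses impose.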

Image: OnScale GUI showing hundreds of simulations executing in parallel on OnScale Cloud. Each simulation uses four compute cores, so replicating this with legacy desktop simulation would require several hundred machines (~1,000 cores), terabytes of RAM, and several hundred licenses of your desktop simulation product.

Each simulation is automatically allocated the cores and memory required for it to solve efficiently, and because there are no software licenses, you are not limited to a certain number of parallel runs.

Want to take all of those resources and use them to solve one very large problem? OnScale’s distributed domain decomposition allows you to break up large problems over multiple machines for huge speedups in simulation time. Distributing the memory requirements among many machines lets you solve large simulations, effectively eliminating the barriers that previously existed due to sheer problem size.
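To make the memory argument concrete, here is a minimal Python sketch of the general idea of domain decomposition. It is not OnScale’s implementation: it splits a one-dimensional range of cells into contiguous, near-equal blocks and estimates the memory each machine would hold. The names `decompose` and `memory_per_part_gb` and the bytes-per-cell figure are hypothetical, and the boundary (halo) cells that a real solver exchanges between neighbors are ignored.

```python
def decompose(num_cells, num_parts):
    """Split cell indices 0..num_cells-1 into num_parts contiguous ranges.

    Returns a list of (start, stop) pairs with stop exclusive. Sizes
    differ by at most one cell so the load is roughly balanced.
    """
    base, extra = divmod(num_cells, num_parts)
    ranges = []
    start = 0
    for rank in range(num_parts):
        # The first `extra` parts each take one leftover cell.
        size = base + (1 if rank < extra else 0)
        ranges.append((start, start + size))
        start += size
    return ranges


def memory_per_part_gb(num_cells, num_parts, bytes_per_cell):
    """Estimate memory (in GB) held by the largest part, ignoring halo cells."""
    largest = max(stop - start for start, stop in decompose(num_cells, num_parts))
    return largest * bytes_per_cell / 1e9


# Assumed figures for illustration: one billion cells at 100 bytes per cell.
single_machine_gb = memory_per_part_gb(1_000_000_000, 1, 100)
sixteen_machines_gb = memory_per_part_gb(1_000_000_000, 16, 100)
```

Under these assumed figures, a problem that would need about 100 GB on one machine needs only a few gigabytes per machine when spread over sixteen, which is why problem size stops being a hard limit.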

Control and Share Your Data, Anywhere

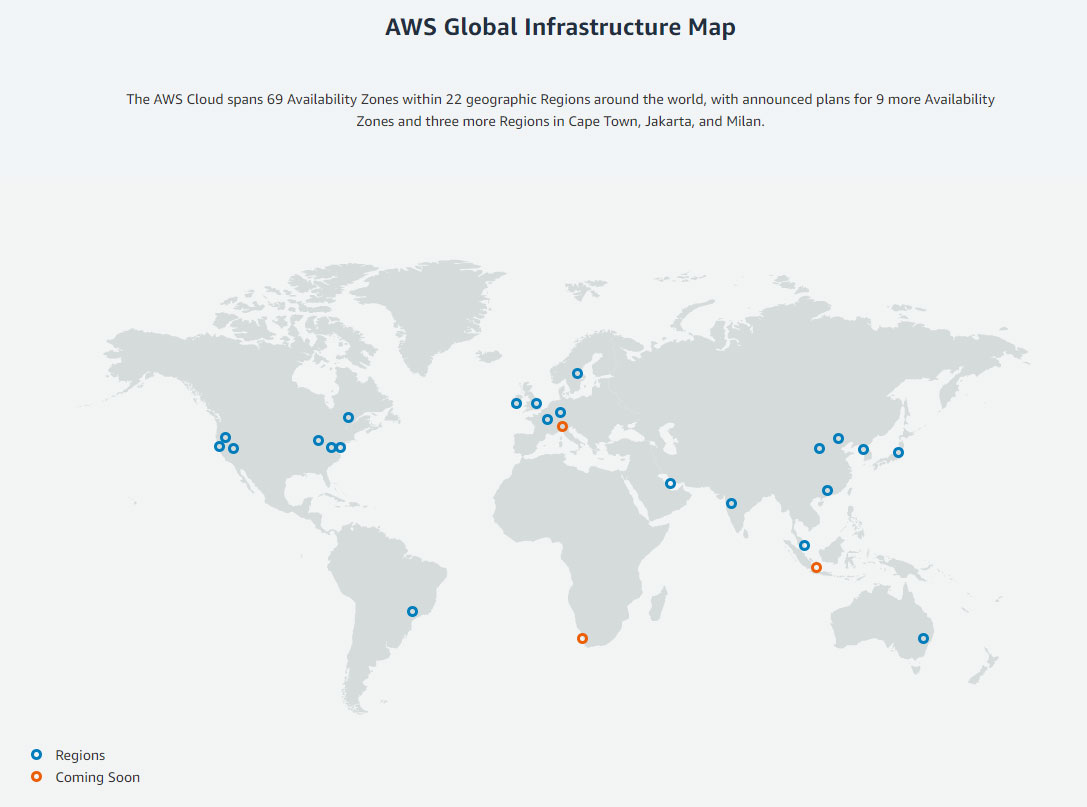

Our Cloud platform, deployed on the industry-leading Amazon Web Services (AWS), helps you access all this data quickly. Wherever you are in the world, there’s a data center nearby to keep latency to a minimum.

Image: Massive (BDoF) MPI simulation showing the different regions (different colors based on MPI segmentation)

Reduce Cost with Cloud Simulation

The cost advantages of OnScale vs legacy on-premise HPC are easy to see. Local hardware requires a large upfront investment to purchase and set up, and it requires constant monitoring and maintenance throughout its lifetime. When machines experience issues, they need to be taken down and fixed, leading to costly delays that can wreak havoc on deadlines. Even when the hardware is running smoothly, it’s not always in use, meaning that for large stretches of time it sits collecting dust without providing any return on the investment.

With OnScale, there is no upfront investment cost and no time required to set up. Simply download the software and start submitting simulations to the cloud. Through AWS, OnScale can provide instant access to the most powerful network of data centers in the world. The vast scope of this network also ensures its reliability. In the event that a particular machine goes down, OnScale is able to seamlessly switch resources so that you’re always able to submit simulations without ever noticing an interruption. All of the backend IT infrastructure is taken care of, freeing you up to focus on what really matters.

Improve Accessibility for Teams

Building a functional Cloud platform is great, but we also aim to make it as easy to use as possible. You’re no longer tied to a specific machine on a specific company network, so you can connect to OnScale and work from anywhere.

As long as you have an internet connection, you have the power of the entire Cloud at your fingertips. You’ll never hit a queue and have to wait or fight for priority to use the HPC hardware. OnScale is always available and always running, so you can submit simulations, turn off your local machine or connection, and come back when the simulations are done to view the results.

As a Cloud company, we also take care of all of the backend management for you. When we release a new feature, it’s automatically deployed to the cloud, giving you instant access. Legacy HPC requires constant care to keep software and licenses up to date. All of our user management is handled through our convenient portal, meaning there’s no IT infrastructure needed to manage the software.

Business is moving to the cloud, and here at OnScale we’re sure you won’t ever want to work another way once you’ve tried it out and experienced the benefits over legacy HPC.
