October 10, 2019

Over the past two years we have seen rapidly growing interest in cloud computing in the engineering community. According to Hyperion (the former HPC group at IDC), over 70% of HPC sites run some simulation jobs in public clouds today, up from 13% in 2011, and over 10% of all HPC jobs now run in clouds such as Microsoft Azure, Amazon AWS and Google Cloud. Although the cloud “on-boarding” process is well understood, major challenges still exist. Obstacles include on-demand cloud licenses from independent software vendors (ISVs), multi-gigabyte data transfer, security concerns, lack of cloud expertise and the potential loss of control over cloud-based assets.

In 2015, based on the experience gained from our previous 100+ cloud experiments, we reached an important milestone when we introduced novel software container technology based on Docker containers, which we applied to complex engineering CAE workflows. Use of these HPC containers by our cloud experiment teams dramatically shortened cloud experiment times from months to days. Today, major cloud hurdles are well understood and have mostly been resolved, and access to and use of cloud resources has become as easy as the use of in-house desktop systems.

Now, our new UberCloud 2019 annual compendium describes 13 technical computing applications in the cloud. Like its predecessors, this year’s edition draws from a select group of projects undertaken as part of the UberCloud Experiment, sponsored again by Hewlett Packard Enterprise, Intel and Digital Engineering Magazine. The Compendium is a way of sharing our cloud experience with the broader engineering community. The Compendium has just been released and is now available for free download.

These new case studies include:

- Establishing the Design Space of Sparged Bioreactors;

- Moisture and Moisture Transfer Within a Residential Condo Tower;

- 9th Graders Design Flying Boats in ANSYS Discovery Live;

- CFX Aerodynamics of a 3D Wing;

- Race Car Airflow Simulation;

- Deep Learning for Fluid Flow Prediction;

- Machine Learning Model for Predictive Maintenance;

- Deep Learning in CFD;

- Fluid-Structure Interaction of Aortic Heart Valves;

- Atrial Appendage Occluder Device using the Living Heart Model;

- Migrating Engineering Workloads for FLSmidth A/S; and,

- Overhead Electrical Power Lines in Preventive Maintenance.

Below, we briefly summarize four of these CAE UberCloud Experiments to demonstrate the power of the cloud for engineering and scientific applications.

Establishing the Design Space of a Sparged Bioreactor on Azure

Bioreactors are the heart of every pharmaceutical manufacturing process. One goal of a bioreactor is to operate at process conditions that provide sufficient oxygen to the suspended cells. The design space of a bioreactor defines the relationship between the input and output parameters of the cell culture process. This knowledge is used to establish and maintain consistent process (and therefore product) quality. The design space typically relies on a design of experiments (DOE) approach to characterize the interdependencies between tank design and process operating conditions.

This can be an expensive, time-consuming exercise to perform in the lab, especially at manufacturing scale. Therefore, the pharmaceutical industry has adopted computational fluid dynamics (CFD) modeling as a cost-saving option that can also reduce the risk associated with scale-up and day-to-day operations. However, the simulation time and compute resources required to explore the relationships between various process parameters can be quite extensive.

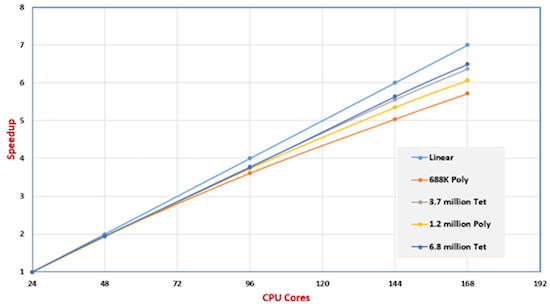

Comparison of solution speed scale-up for a different number of cores and with different mesh densities. All images courtesy of UberCloud.

Therefore, Sravan Kumar Nallamothu, senior application engineer, and Marc Horner, PhD, technical lead, Healthcare, at ANSYS, explored the use of HPC to establish the design space of a production-scale sparged bioreactor. They developed and executed the simulation framework on Azure Cloud resources running the ANSYS Fluent container from UberCloud. In Fig. 1, air bubbles undergo breakup near the impeller blades and coalesce in the circulation regions with low turbulent dissipation rates. This leads to bubble size variation throughout the tank. Since the interfacial area depends on the bubble size, bubble size distribution plays a critical role in oxygen mass transfer.

Fluid-Structure Interaction of Artificial Aortic Heart Valves in the Advania Data Center Cloud

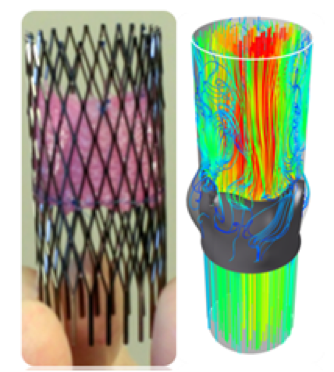

This cloud experiment was performed by Deepanshu Sodhani, R&D project manager for Engineering, Modeling and Design at enmodes GmbH, a worldwide service provider in the field of medical technology with special expertise in the field of conception, research and development of medical devices. In this project, enmodes has been supported by Dassault Systèmes SIMULIA, Capvidia/FlowVision, Advania Data Centers and UberCloud, and sponsored by Hewlett Packard Enterprise and Intel. It is based on the development of Dassault’s Living Heart Model applied to Fluid-Structure Interaction of Artificial Aortic Heart Valves.

The FSI simulation model was computed on the cloud computing services provided by UberCloud, using Abaqus from SIMULIA as the structural solver and FlowVision from Capvidia as the fluid solver. Scalability of the application was studied on up to 64 CPU cores.

The engineered valve is sutured into a self-expandable nitinol stent (left). Velocity streamlines through computational domain for half-open valve (right).

To understand the correlation between the level of fidelity and the resulting accuracy, the model was further evaluated to predict the risk parameters in a mesh convergence study over three fluid domain mesh densities. The valve was modeled using approximately 90,000 finite elements, whereas the fluid domain was modeled using approximately 300,000 (mesh 1), 900,000 (mesh 2) and 2,700,000 (mesh 3) finite volume cells.
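As a rough illustration of how such a three-mesh study is commonly assessed, the sketch below computes the linear refinement ratio implied by the cell counts quoted above and the observed order of convergence from three solutions. It assumes a 3D mesh refined uniformly in all directions, which the article does not state. The function names are hypothetical, and the sample values are synthetic numbers built from a known second-order error law, not results from the experiment.

```python
import math


def linear_refinement_ratio(coarse_cells, fine_cells, dimensions=3):
    """Per-direction refinement ratio implied by two cell counts.

    Assumes uniform refinement in every direction (an assumption,
    not something the article confirms).
    """
    return (fine_cells / coarse_cells) ** (1.0 / dimensions)


def observed_order(f_coarse, f_medium, f_fine, ratio):
    """Observed order of convergence for a constant refinement ratio."""
    return math.log(abs(f_coarse - f_medium) / abs(f_medium - f_fine)) / math.log(ratio)


# Cell counts quoted in the article for mesh 1, mesh 2 and mesh 3.
cells = [300_000, 900_000, 2_700_000]
ratios = [linear_refinement_ratio(a, b) for a, b in zip(cells, cells[1:])]

# Synthetic check only: a quantity with an exact second-order error term.
r = ratios[0]


def synthetic(h):
    return 1.0 + 0.5 * h ** 2


order = observed_order(synthetic(r ** 2), synthetic(r), synthetic(1.0), r)

print("refinement ratios:", ratios)
print("observed order on synthetic data:", order)
```

Each mesh has three times the cells of the previous one, so under the uniform-refinement assumption the spacing shrinks by a factor of about 1.44 per level. With real risk parameters from the three meshes in place of the synthetic values, the same function gives an indication of whether the solution is converging.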

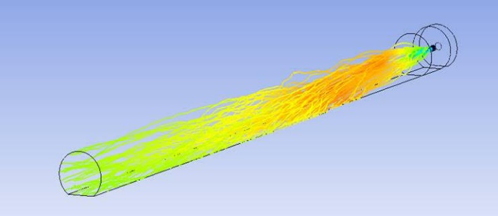

Migrating Engineering Workloads to the Azure Cloud—An FLSmidth Case Study

FLSmidth is a leading supplier of engineering, equipment and service solutions to customers in the mining and cement industries. In September 2018, Sam Zakrzewski from FLSmidth approached UberCloud to perform an extensive proof of concept to evaluate whether the timing was right to consider moving the company's engineering simulation workload to the cloud. The project team designed and configured FLSmidth’s Azure cloud environment and benchmarked applications to understand how engineering simulations use cloud HPC resources and related workflows. Multi-node runs showed excellent scalability when using many compute nodes for large simulations. The ability to scale up to a large number of resources enabled FLSmidth to run multiple jobs simultaneously, reducing multi-job duration and significantly increasing HPC throughput for engineers.

The FLSmidth engineering team, which is distributed between locations in Copenhagen, India, South Africa and Brazil, drafted a list of requirements for moving different simulation scenarios using ANSYS CFX and ESSS Rocky to Azure. FLSmidth currently has its own on-premises Intel Haswell-based HPE cluster with 512 cores and InfiniBand FDR for its 20 regular users. As a next step, it wishes to increase its user base and upgrade the on-premises environment with cloud bursting for mission-critical applications in CFD (multi-phase, combustion) using ANSYS CFX and Fluent, STAR-CCM+ and ANSYS Mechanical (static, thermal, modal, fatigue). In addition, the company is applying the discrete element method (DEM) to simulate granular and discontinuous materials with ESSS Rocky.

Overhead Electrical Power Lines Lifespan Assessment in Preventive Maintenance in the Advania Data Center Cloud

Réseau de Transport d'Électricité (RTE) is the operator of the high-voltage electricity transmission network in France. With more than 100,000 kilometers of high voltage overhead lines, this network is the largest in Europe. Management of these physical assets is a top priority. The challenges are particularly high in the field of overhead conductors, as the costs of replacing them will reach several hundred million Euros annually in the coming years. Preventive maintenance plays a major role in providing uninterrupted services and reducing outages and costs due to equipment fatigue and failures.

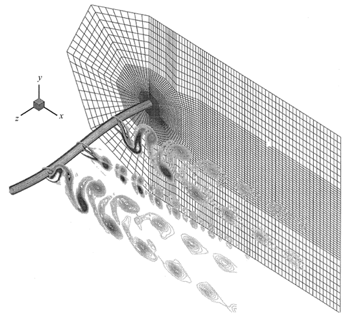

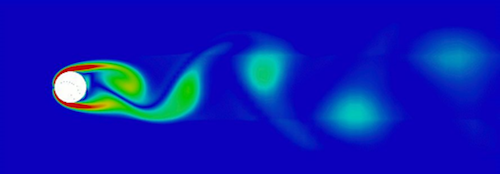

In this project, RTE studied the phenomenon of vortex-induced vibrations of overhead power lines. Predicting these line vibrations is essential for a better estimate of the lifespan of overhead lines. The team analyzed the lift and drag forces created by low wind speeds, and the resulting line vibrations, whose cyclic stresses cause fatigue failure of the wires. Researchers developed a fluid-structure interaction process in which the CFD code Code_Saturne simulates the airflow around the overhead lines and an in-house finite element (FEM) solid solver captures the overall line dynamics. Coupling the two codes determines the interaction between the wind and the power line. The solution used a quasi-3D method to simplify the coupling problem: CFD is performed separately for a series of sections of the overhead line (fluid solver), the overall line dynamics are computed by the FEM solver, and the fluid and solid solvers communicate via MPI message passing.
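The data flow of such a quasi-3D coupling can be sketched in a few lines. This is a toy illustration only, not RTE's implementation. A plain serial loop stands in for the MPI exchange, `fluid_force` is a hypothetical placeholder for one 2D CFD section, and `advance_line` is a simple taut-string update standing in for the in-house FEM solver. The tension, mass per unit length, forcing frequency and section count are assumed values chosen for illustration. Only the 325 m line length comes from the article.

```python
import math


def fluid_force(velocity, time):
    """Placeholder for one 2D CFD section (hypothetical force model).

    Returns a lift force per unit length: an oscillating load minus a
    damping term that depends on the local line velocity.
    """
    return 0.1 * math.sin(2.0 * math.pi * 10.0 * time) - 0.05 * velocity


def advance_line(y, v, forces, dt, dx, tension, mass_per_length):
    """Placeholder for the solid solver: explicit taut-string update.

    The two end points stay fixed, like a line clamped at its supports.
    """
    new_y, new_v = y[:], v[:]
    for i in range(1, len(y) - 1):
        curvature = (y[i - 1] - 2.0 * y[i] + y[i + 1]) / dx ** 2
        accel = (tension * curvature + forces[i]) / mass_per_length
        new_v[i] = v[i] + dt * accel
        new_y[i] = y[i] + dt * new_v[i]
    return new_y, new_v


def run_coupled(n_sections=11, n_steps=100, dt=1e-3, length=325.0,
                tension=20_000.0, mass_per_length=1.5):
    """Alternate fluid and solid steps; all defaults except length are assumed."""
    dx = length / (n_sections - 1)
    y = [0.0] * n_sections  # line displacement at each section
    v = [0.0] * n_sections  # line velocity at each section
    for step in range(n_steps):
        time = step * dt
        # Fluid step: each section is solved independently (one MPI rank
        # per section in a real run; a serial loop here).
        forces = [fluid_force(v[i], time) for i in range(n_sections)]
        # Solid step: gather all section forces and move the whole line.
        y, v = advance_line(y, v, forces, dt, dx, tension, mass_per_length)
    return y, v


displacements, velocities = run_coupled()
print(displacements)
```

The point of the structure is that the fluid sections never talk to each other directly. They only see the line motion returned by the solid solver, which is what makes the fluid side easy to distribute across many cores.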

The final simulation ran on a 1,000-core, 32-node cluster in the Advania Data Centers cloud. Reaching near-steady vibrations took about 55,000 computational timesteps, corresponding to 55 seconds of physical time, and 431 hours (about 18 days) of run time. At that point the vibrations become stable, i.e., the vibration magnitude becomes constant. The 1,000-core run simulated a 325 m overhead line represented by 1,000 cylinders.
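The figures quoted for this run imply a few derived quantities that help put its cost in perspective. The short calculation below uses only the numbers in the article. It assumes that all 1,000 cores were busy for the full 431 hours and that the 1,000 cylinders were evenly spaced along the line, neither of which the article states explicitly.

```python
# Figures quoted in the article.
timesteps = 55_000
physical_time_s = 55.0
wall_hours = 431.0
cores = 1_000
line_length_m = 325.0
cylinders = 1_000

# Derived quantities.
timestep_ms = physical_time_s / timesteps * 1e3           # physical time per step
wall_s_per_step = wall_hours * 3600.0 / timesteps         # wall-clock cost per step
wall_days = wall_hours / 24.0                             # run time in days
core_hours = cores * wall_hours                           # assumes all cores busy
section_spacing_m = line_length_m / cylinders             # assumes even spacing
slowdown = wall_hours * 3600.0 / physical_time_s          # wall time per physical second

print(f"timestep: {timestep_ms:.2f} ms")
print(f"wall time per step: {wall_s_per_step:.1f} s")
print(f"run time: {wall_days:.1f} days")
print(f"core-hours: {core_hours:,.0f}")
print(f"section spacing: {section_spacing_m:.3f} m")
print(f"wall seconds per simulated second: {slowdown:,.0f}")
```

Under these assumptions, each timestep covers about one millisecond of physical time and costs roughly 28 seconds of wall-clock time, for a total of 431,000 core-hours.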

Vortex-shedding around a 2D section of an overhead electrical power transmission line.

Wolfgang Gentzsch is co-founder and president of UberCloud, the community, marketplace, and software provider for engineers and scientists to discover, try, and buy complete hardware/software solutions in the cloud. Wolfgang is a passionate engineer and high-performance engineering computing veteran with 40 years of experience as a researcher, university professor, and serial entrepreneur. He can be reached at wolfgang.gentzsch@theubercloud.com. Extended case studies of these examples can be downloaded from the UberCloud site.
